STREAMING DATA PIPELINE

Deliver Real-Time Insights with Qlik's Streaming Data Pipeline

Build real-time streaming data pipelines that power instant analytics. Stream, transform, and deliver data continuously to enable live dashboards and smarter, faster business decisions.

How does Qlik's streaming data pipeline work?

Step 1 - Connect and stream data from any source in real time

Step 2 - Cleanse, enrich, and transform data as it flows

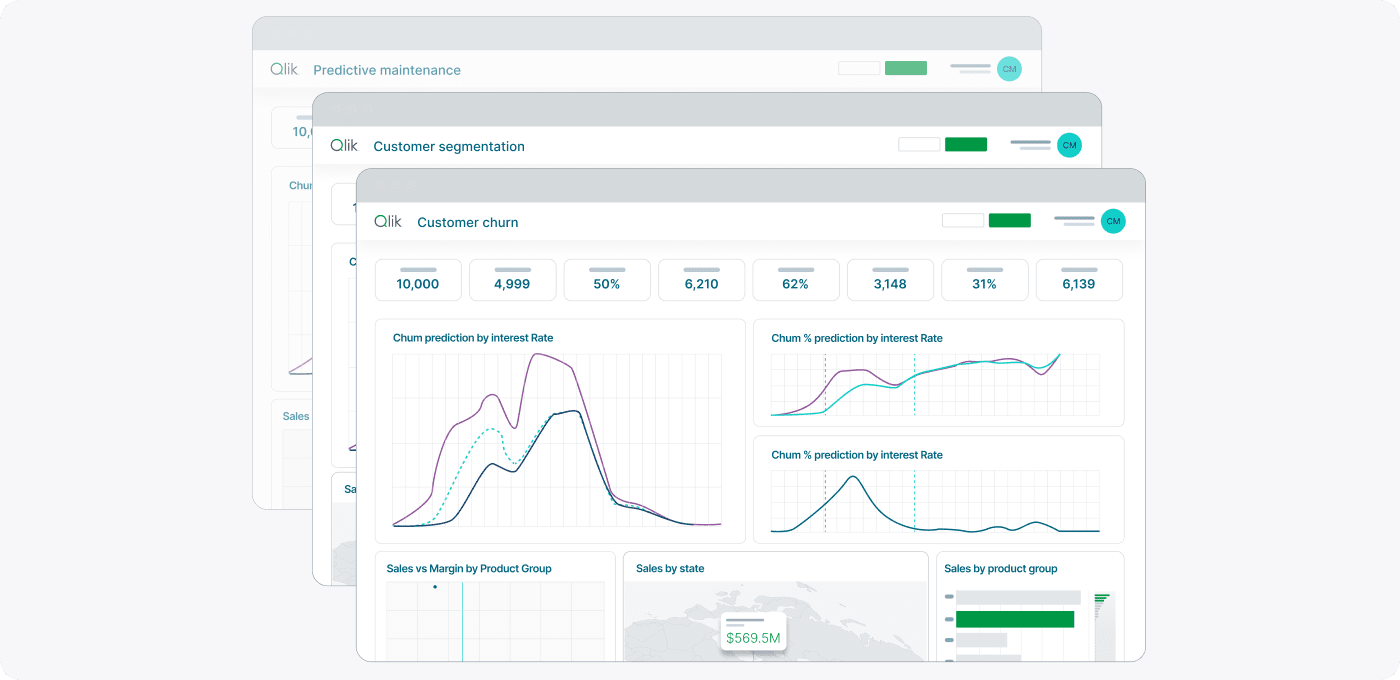

Step 3 - Analyze and visualize streaming data instantly

Step 4 - Deliver continuous updates to analytics and applications
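The four steps above can be pictured as a chain of small stages that each handle one event at a time. The following is a minimal, generic Python sketch of that idea, not Qlik's API: the function names, the `sensor,value` line format, and the site lookup table are all hypothetical and exist only for illustration.

```python
from typing import Dict, Iterable, Iterator, List

Event = Dict[str, object]


def source(raw_lines: Iterable[str]) -> Iterator[Event]:
    """Step 1: turn each arriving line into an event as soon as it is read."""
    for line in raw_lines:
        sensor, _, value = line.partition(",")
        yield {"sensor": sensor, "value": value}


def transform(events: Iterable[Event], site_by_sensor: Dict[str, str]) -> Iterator[Event]:
    """Step 2: cleanse (trim, parse), enrich (add site), and reshape each event."""
    for event in events:
        sensor = str(event["sensor"]).strip()
        try:
            value = float(str(event["value"]))
        except ValueError:
            continue  # drop records whose value cannot be parsed
        yield {"sensor": sensor, "site": site_by_sensor.get(sensor, "unknown"), "value": value}


def running_max(events: Iterable[Event]) -> Iterator[Event]:
    """Step 3: maintain a live metric that updates with every event."""
    highest = float("-inf")
    for event in events:
        highest = max(highest, float(str(event["value"])))
        yield {**event, "max_so_far": highest}


def deliver(events: Iterable[Event], sink: List[Event]) -> None:
    """Step 4: push each update to a consumer (here, a plain list)."""
    for event in events:
        sink.append(event)


# Hypothetical input: three raw lines, one of them malformed.
lines = [" s1 ,21.5", "s2,not-a-number", "s2,23.0"]
sites = {"s1": "plant-a", "s2": "plant-b"}
dashboard: List[Event] = []
deliver(running_max(transform(source(lines), sites)), dashboard)
print(dashboard)
```

Because every stage is a generator, no stage waits for the full input: each event moves through all four steps before the next one is read.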

Why choose Qlik for streaming data pipelines?

Streaming infrastructure with built-in governance

Maintain compliance and data trust in streaming scenarios with lineage tracking, quality monitoring, and policy enforcement that operate on data in motion without impacting latency.

Stream data anywhere in your infrastructure

Deploy streaming pipelines across public clouds, private data centers, and edge locations, with the same capabilities and centralized management in every environment.

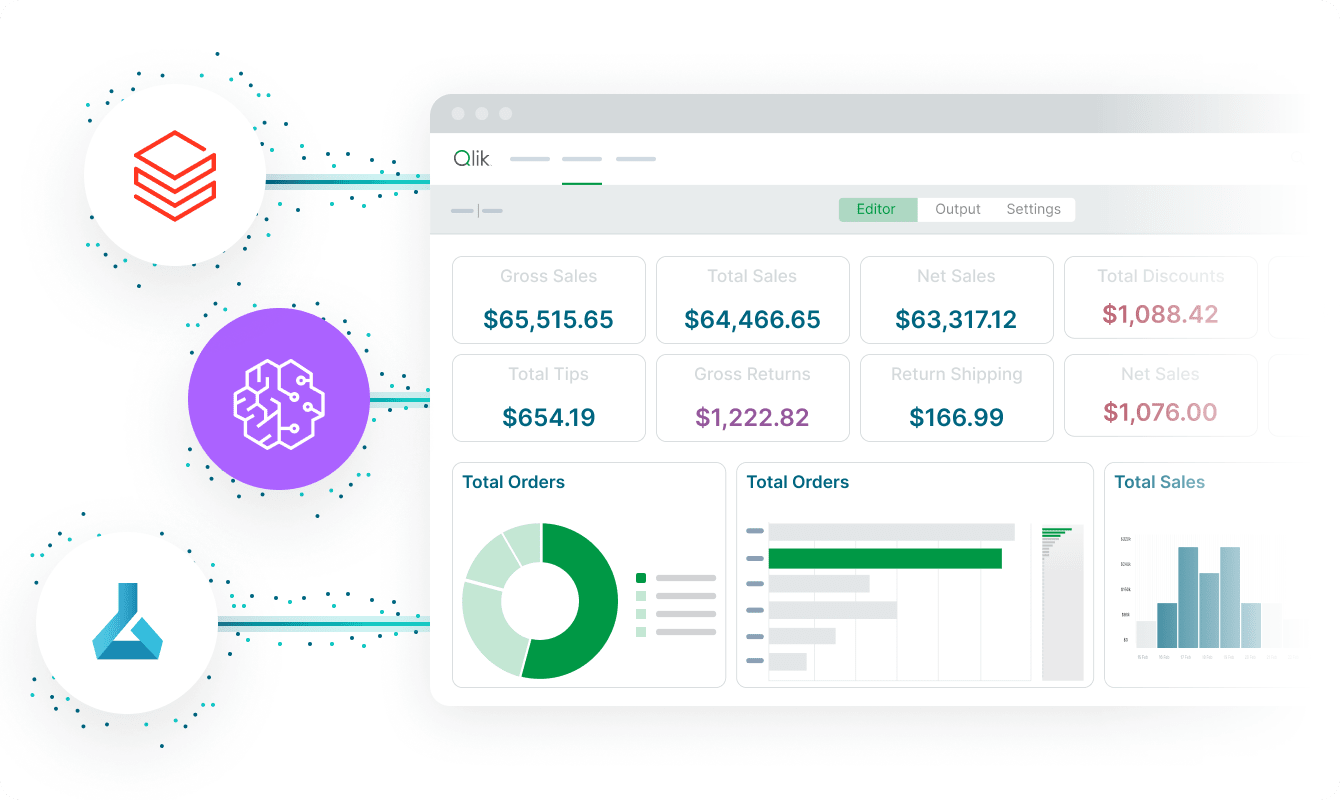

Visual development for streaming workflows

Build streaming pipelines through drag-and-drop interfaces that simplify complex stream processing, while providing scripting options for advanced transformations and custom logic.

Enterprise-scale streaming with sub-second latency

Process millions of events per second with distributed stream processing. Intelligent load distribution and auto-scaling keep latency consistently low, even during traffic spikes.

Trusted for mission-critical streaming analytics

Join organizations that use Qlik's streaming pipelines to monitor operations, detect fraud, and optimize processes in real time, with instant insights from continuous data flows.

Connect to 500+ data sources with Qlik’s analytics integrations

Streaming data pipeline FAQs

Streaming processes data continuously as it arrives, rather than collecting it and processing it in periodic batches. This enables immediate insights and actions based on current data instead of delayed batch results.
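The difference can be shown with a small, generic Python sketch (not Qlik-specific; the function names and sample readings are illustrative assumptions). The batch version produces one result after all data has been collected, while the streaming version produces an updated result after every event.

```python
from typing import Iterable, Iterator, List


def batch_average(events: List[float]) -> float:
    """Batch style: wait for the whole collection, then compute once."""
    return sum(events) / len(events)


def streaming_average(events: Iterable[float]) -> Iterator[float]:
    """Streaming style: emit an updated average after every event."""
    count = 0
    total = 0.0
    for value in events:
        count += 1
        total += value
        yield total / count


readings = [10.0, 20.0, 30.0, 40.0]
print(batch_average(readings))            # a single result, available only at the end
print(list(streaming_average(readings)))  # one result per arriving event
```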

Streaming pipelines can handle sudden spikes in data volume. Elastic scaling automatically adds processing capacity during traffic spikes, while backpressure handling prevents overwhelming downstream systems, maintaining reliable operation as demand varies.
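Backpressure is the part of this that is easiest to illustrate. The sketch below uses a bounded queue from Python's standard library: when the slow consumer falls behind, the producer blocks instead of flooding it. This is a generic illustration under assumed names (`run_pipeline`, the buffer size, the simulated delay), not a description of how Qlik implements it, and it does not model elastic scaling.

```python
import queue
import threading
import time
from typing import List, Tuple

_DONE = object()  # sentinel marking the end of the stream


def run_pipeline(num_events: int, buffer_size: int) -> Tuple[List[int], int]:
    """Run a fast producer against a slow consumer through a bounded buffer."""
    buffer: queue.Queue = queue.Queue(maxsize=buffer_size)
    delivered: List[int] = []
    max_depth = 0

    def producer() -> None:
        for event in range(num_events):
            buffer.put(event)  # blocks while the buffer is full: backpressure
        buffer.put(_DONE)

    def consumer() -> None:
        nonlocal max_depth
        while True:
            max_depth = max(max_depth, buffer.qsize())
            item = buffer.get()
            if item is _DONE:
                return
            time.sleep(0.001)  # simulate a slow downstream system
            delivered.append(item)

    threads = [threading.Thread(target=producer), threading.Thread(target=consumer)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return delivered, max_depth


delivered, max_depth = run_pipeline(num_events=20, buffer_size=3)
print(len(delivered), max_depth)
```

No event is dropped and the buffer never grows past its configured size, because the producer is slowed to the pace the consumer can sustain.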

Checkpoint recovery and automatic reconnection ensure pipelines resume from the last processed position without data loss, maintaining exactly-once processing semantics during disruptions.
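The idea behind checkpoint recovery can be sketched in a few lines of generic Python. Everything here is a simplified assumption for illustration: the in-memory `checkpoint` dictionary stands in for durable storage, and `SimulatedCrash` stands in for a real outage. The key point is that the result and the stream position are committed together, so after a restart no record is skipped or counted twice.

```python
from typing import Dict, List, Optional


class SimulatedCrash(Exception):
    """Stands in for a network drop or process failure."""


def process(source: List[int], checkpoint: Dict[str, int],
            crash_at: Optional[int] = None) -> None:
    """Consume `source` from the checkpointed offset, keeping a running total."""
    offset = checkpoint["offset"]
    while offset < len(source):
        if crash_at is not None and offset == crash_at:
            raise SimulatedCrash(f"failure before record {offset} was committed")
        new_total = checkpoint["total"] + source[offset]
        offset += 1
        # Result and position are committed together. In a real system this
        # would be a single atomic write to durable storage.
        checkpoint.update({"offset": offset, "total": new_total})


events = [5, 7, 11, 13]
checkpoint = {"offset": 0, "total": 0}

try:
    process(events, checkpoint, crash_at=2)
except SimulatedCrash:
    pass  # the checkpoint still holds the last committed position

process(events, checkpoint)  # resume from the last committed position
print(checkpoint)
```

After the resumed run, the total equals the sum of all four events, the same result an uninterrupted run would have produced.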

In-flight validation applies quality rules to streaming data in real time, with options to quarantine bad records, generate alerts, or apply automated corrections without stopping the stream.
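As a rough illustration of in-flight validation, the generic Python sketch below checks each record as it passes through, quarantines invalid ones with an alert, and applies a simple automated correction to the rest, all without stopping the stream. The rule (non-negative numeric `amount`), the correction (normalizing `currency`), and every name are hypothetical examples, not Qlik features or APIs.

```python
from typing import Dict, Iterable, Iterator, List

Record = Dict[str, object]


def validate_stream(records: Iterable[Record], quarantine: List[Record],
                    alerts: List[str]) -> Iterator[Record]:
    """Yield valid records; divert invalid ones without halting the stream."""
    for record in records:
        amount = record.get("amount")
        if not isinstance(amount, (int, float)) or amount < 0:
            quarantine.append(record)  # set the bad record aside for review
            alerts.append(f"quarantined record {record.get('id')}: invalid amount")
            continue
        currency = record.get("currency")
        if isinstance(currency, str):
            # Automated correction: normalize whitespace and letter case.
            record = {**record, "currency": currency.strip().upper()}
        yield record


incoming = [
    {"id": 1, "amount": 10.0, "currency": " usd "},
    {"id": 2, "amount": -5, "currency": "USD"},
    {"id": 3, "amount": 7, "currency": "EUR"},
]
quarantine: List[Record] = []
alerts: List[str] = []
clean = list(validate_stream(incoming, quarantine, alerts))
print(clean, quarantine, alerts)
```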