DATA PIPELINE SOLUTIONS

Build Efficient, Automated Data Flows with Qlik's Data Pipeline Solutions

Streamline your data flow with comprehensive data pipeline solutions. Automate data collection, transformation, and delivery to power analytics and business intelligence across your organization.

How Qlik's Data Pipeline Solutions work

Step 1 - Connect to multiple structured and unstructured sources

Step 2 - Cleanse, transform, and enrich data automatically

Step 3 - Load and deliver data to analytics and cloud systems

Step 4 - Monitor, optimize, and scale your pipelines
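Conceptually, these four steps follow the familiar extract, transform, load (ETL) pattern, with monitoring on top. The following is a minimal, generic Python sketch of that flow for illustration only. It assumes small in-memory sources and uses hypothetical function names (`extract`, `transform`, `load`, `run_pipeline`). It does not use or represent any Qlik product API.

```python
import csv
import io
import json
import time

# Hypothetical sources: one structured (CSV text), one semi-structured (JSON lines).
CSV_SOURCE = "id,name,amount\n1, Alice ,10.5\n2,Bob,\n"
JSON_SOURCE = '{"id": 3, "name": "carol", "amount": "7"}\n'


def extract():
    """Step 1: read records from multiple sources into plain dicts."""
    records = list(csv.DictReader(io.StringIO(CSV_SOURCE)))
    records += [json.loads(line) for line in JSON_SOURCE.splitlines() if line.strip()]
    return records


def transform(record):
    """Step 2: cleanse, type-convert, and enrich one record; return None to drop it."""
    amount = str(record.get("amount", "")).strip()
    if not amount:
        return None  # drop records that are missing a required field
    value = float(amount)
    return {
        "id": int(record["id"]),
        "name": str(record["name"]).strip().title(),
        "amount": value,
        "amount_band": "high" if value >= 10 else "low",  # simple enrichment
    }


def load(rows, target):
    """Step 3: deliver rows to a target (a plain list stands in for a warehouse)."""
    target.extend(rows)
    return len(rows)


def run_pipeline(target):
    """Step 4: run the steps and collect basic monitoring metrics."""
    start = time.perf_counter()
    raw = extract()
    cleaned = [row for row in (transform(r) for r in raw) if row is not None]
    loaded = load(cleaned, target)
    return {
        "read": len(raw),
        "loaded": loaded,
        "dropped": len(raw) - loaded,
        "seconds": time.perf_counter() - start,
    }


warehouse = []
metrics = run_pipeline(warehouse)
print(metrics["read"], metrics["loaded"], metrics["dropped"])  # prints: 3 2 1
```

Here the row with an empty `amount` is dropped during cleansing, and the returned counts are the kind of figures a monitoring dashboard would track over time.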

Why choose Qlik for data pipeline automation?

Handle any data pattern with one platform

Process both batch and streaming data through unified pipelines that apply consistent transformation logic, governance policies, and quality controls regardless of data arrival patterns.
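The idea of applying one transformation to both arrival patterns can be shown generically. In this minimal Python sketch, a single hypothetical `normalize` function is reused unchanged for a batch (a list available all at once) and a stream (a generator that yields events one at a time). The function names and event shape are assumptions for illustration, not Qlik's API, and governance and quality controls are not modeled.

```python
from typing import Iterable, Iterator


def normalize(event: dict) -> dict:
    """Shared transformation logic: identical rules for batch and streaming data."""
    return {"user": event["user"].strip().lower(), "value": round(float(event["value"]), 2)}


def process_batch(events: list) -> list:
    """Batch mode: all records are available up front."""
    return [normalize(e) for e in events]


def process_stream(events: Iterable[dict]) -> Iterator[dict]:
    """Streaming mode: records are transformed lazily as they arrive."""
    for e in events:
        yield normalize(e)


def event_source() -> Iterator[dict]:
    """Hypothetical stand-in for a message stream."""
    yield {"user": " Ann ", "value": "3.14159"}
    yield {"user": "BOB", "value": 2}


batch_out = process_batch(list(event_source()))
stream_out = list(process_stream(event_source()))
print(batch_out == stream_out)  # True
```

Because both modes call the same `normalize`, a rule change made in one place applies to batch and streaming data alike.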

Deploy pipelines anywhere in your infrastructure

Run pipelines on public clouds, private data centers, or hybrid environments with consistent capabilities and centralized management across all deployment locations.

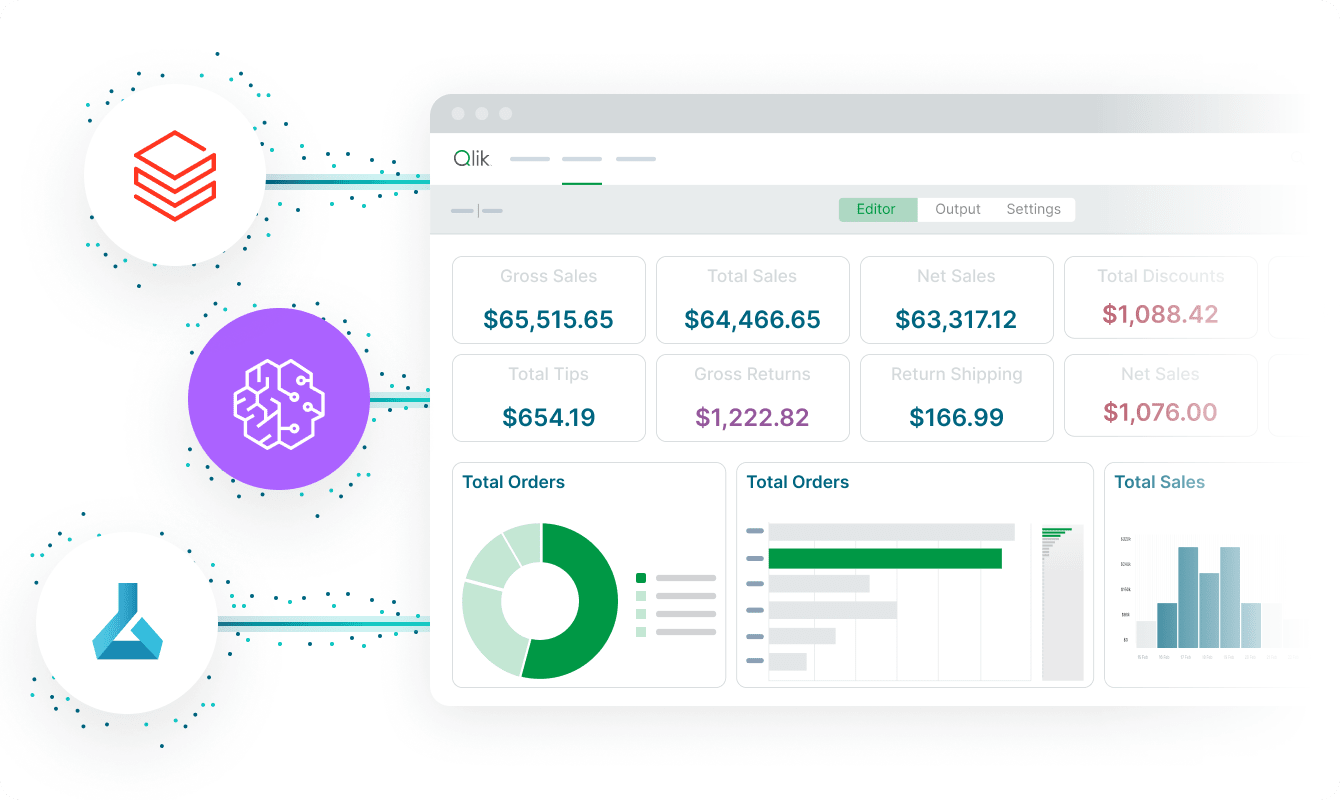

Visibility and control across all pipelines

Track pipeline performance, data quality, and processing status through unified dashboards while enforcing governance policies and security controls automatically.

Visual development that accelerates pipeline creation

Build pipelines with drag-and-drop interfaces that require minimal coding, with scripting options available for advanced transformations when needed.

Reliable pipelines for mission-critical workflows

Join organizations that process petabytes through Qlik's pipelines, supporting analytics, operational reporting, and AI applications with enterprise-grade reliability.

Trusted by leading enterprises worldwide

Connect to 500+ data sources with Qlik’s analytics integrations

Data Pipeline Solutions FAQs

Automated pipelines combine visual development, AI-assisted mapping, and intelligent orchestration to minimize manual coding without sacrificing flexibility, which significantly reduces development time and maintenance overhead.

Unified pipelines support both batch processing and streaming data on the same platform, so you can apply consistent transformation logic regardless of how the data arrives.

Comprehensive dashboards track execution times, resource utilization, and data quality metrics, enabling proactive optimization and rapid troubleshooting when performance issues occur.

Automated error handling includes retry logic and checkpoint recovery, which prevent data loss when a pipeline fails, plus detailed logging that enables rapid diagnosis.
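Retry logic, checkpoint recovery, and logging are general techniques rather than Qlik-specific features. The following minimal Python sketch shows how they fit together. It assumes that records are processed in order and that progress can be stored as a single index. `TransientError`, `process_with_retry`, and `run` are hypothetical names, not part of any Qlik API.

```python
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("pipeline")


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying, such as a timeout."""


def process_with_retry(record, handler, max_retries=3, base_delay=0.01):
    """Call handler(record), retrying transient failures with exponential backoff."""
    for attempt in range(1, max_retries + 1):
        try:
            return handler(record)
        except TransientError as exc:
            log.warning("record %r failed (attempt %d/%d): %s",
                        record, attempt, max_retries, exc)
            if attempt == max_retries:
                raise  # retries exhausted: surface the error to the caller
            time.sleep(base_delay * 2 ** (attempt - 1))


def run(records, handler, checkpoint, sink):
    """Process records in order, resuming from the position stored in checkpoint."""
    start = checkpoint.get("next_index", 0)
    for i in range(start, len(records)):
        result = process_with_retry(records[i], handler)
        # In this in-memory sketch the write and the checkpoint update happen
        # back to back; a real system would commit them atomically.
        sink.append(result)
        checkpoint["next_index"] = i + 1
        log.info("checkpoint advanced to %d", i + 1)


# Usage: the handler crashes on "c", then a rerun resumes from the checkpoint.
def crash_on_c(record):
    if record == "c":
        raise RuntimeError("worker lost")  # not transient, so it is not retried
    return record.upper()


records = ["a", "b", "c", "d"]
checkpoint, sink = {}, []
try:
    run(records, crash_on_c, checkpoint, sink)
except RuntimeError as exc:
    log.error("pipeline failed: %s", exc)

run(records, str.upper, checkpoint, sink)  # resumes at index 2, no duplicates
print(sink)  # ['A', 'B', 'C', 'D']
```

Advancing the checkpoint only after a record has been written means a restart neither skips nor duplicates records. Retries are reserved for errors marked as transient, so permanent failures surface immediately in the logs.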