How big data analytics works

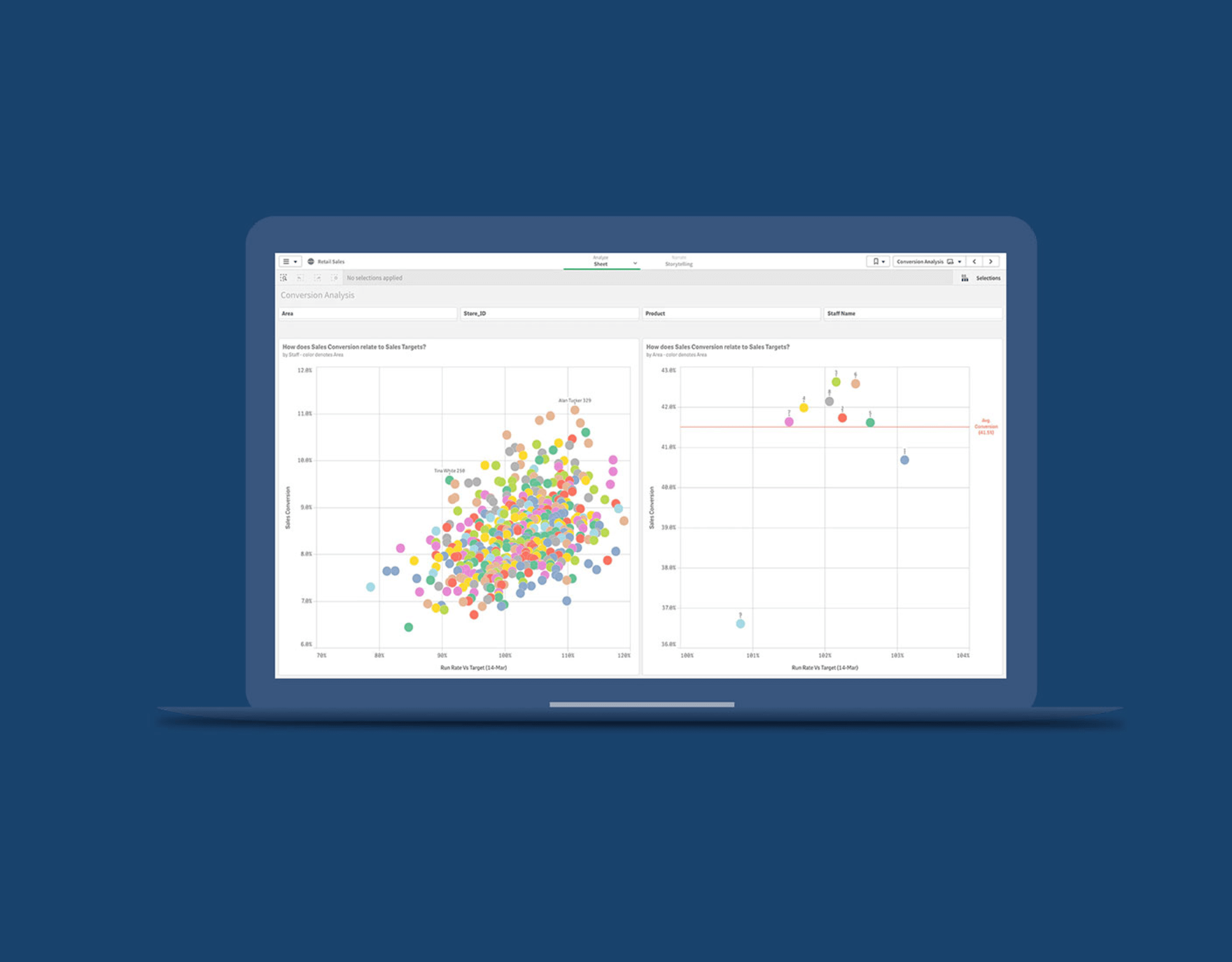

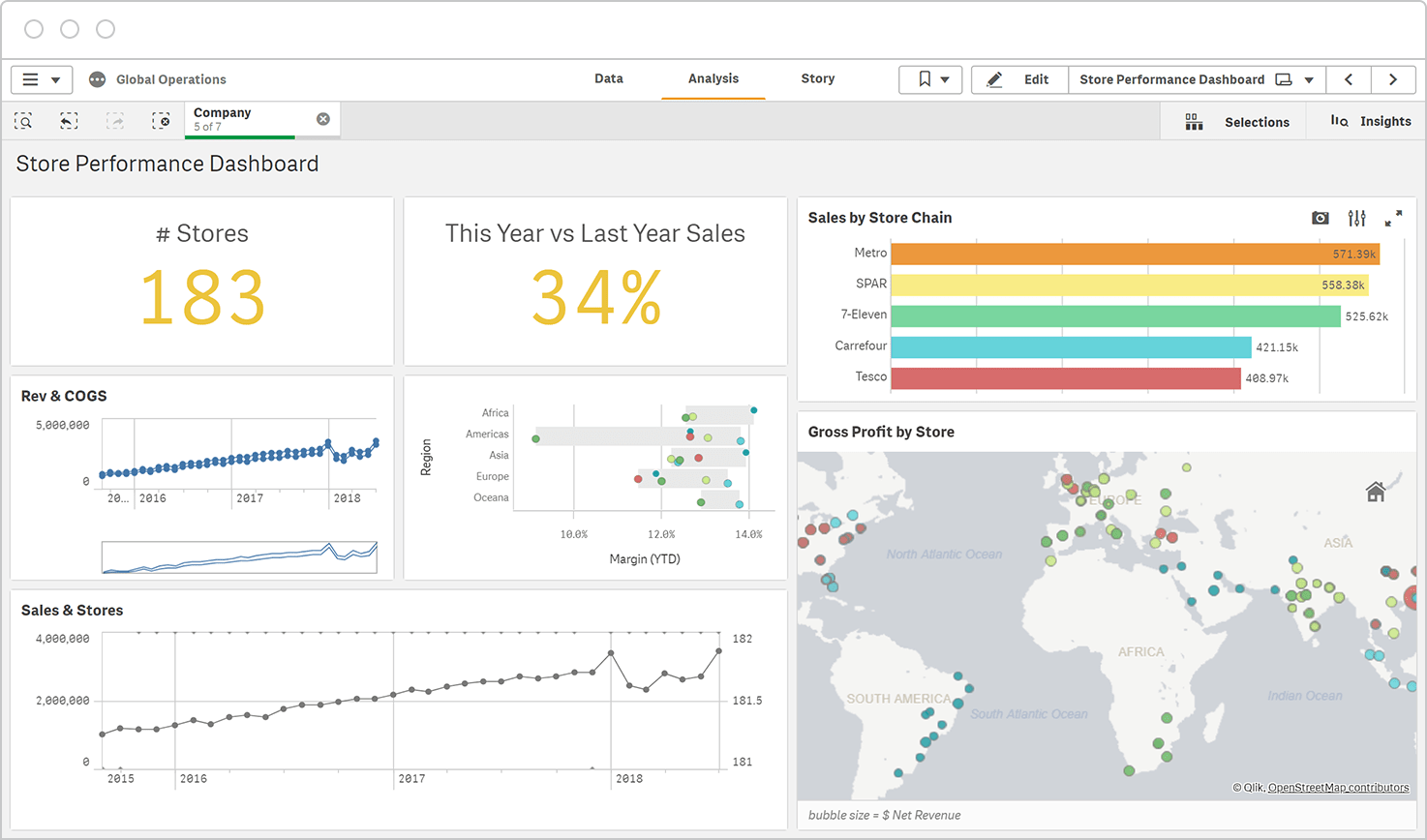

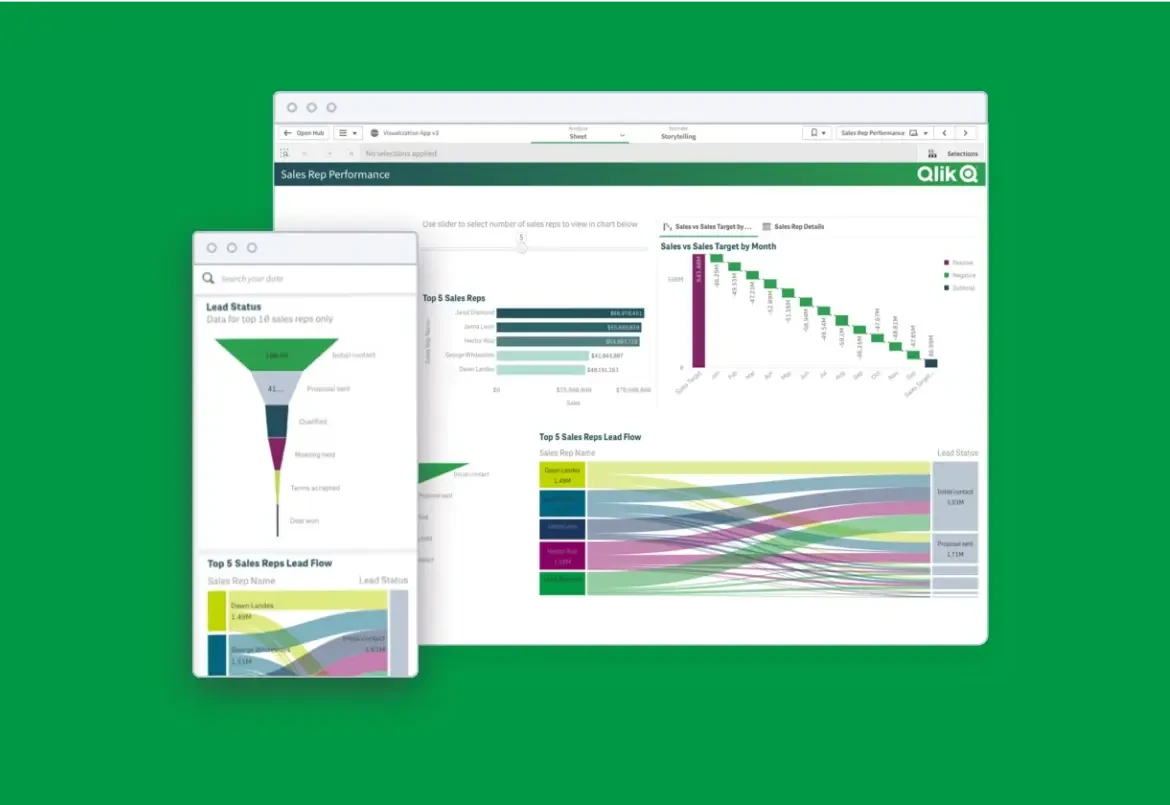

The primary steps of big data analytics are goal definition, data collection, data integration and management, data analysis, and sharing of findings. The advanced analytics involved in exploring and analyzing large volumes of semi-structured and unstructured data requires either an end-to-end big data analytics platform or a broad set of tools that data analysts, data scientists, or engineers apply.

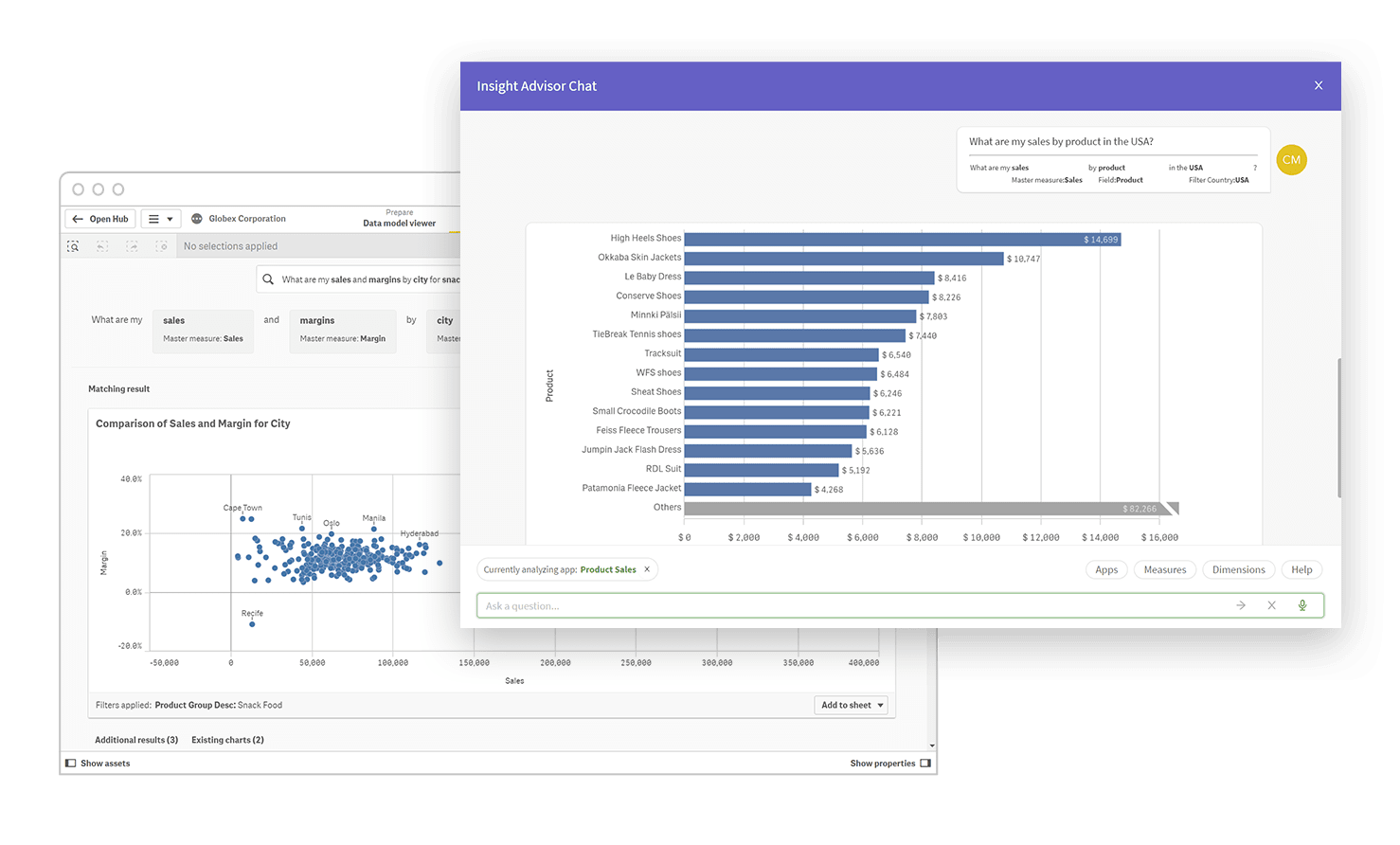

Modern big data analytics uses artificial intelligence (AI) and machine learning to automate processes, suggest insights, perform predictive analytics, and allow natural language interaction. Real-time big data analytics processes data as it arrives, which can further speed decision making or trigger actions and notifications.

Now let’s get more specific.

Once you’ve clearly defined your business objective (such as improving marketing ROI) and collected the data, these are the key steps and processes involved:

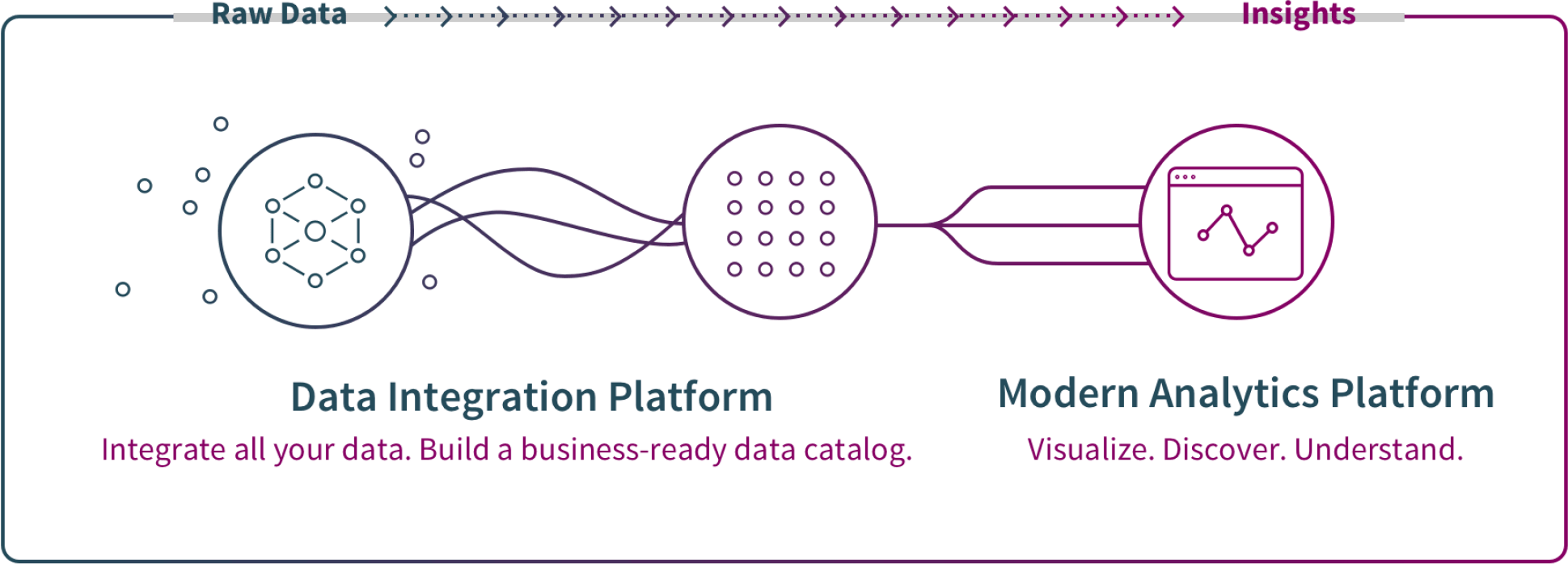

1. Big data integration and management

Before conducting big data analytics, source data must be transformed into clean, business-ready information. Big data integration is the process of combining data from many sources across an organization to provide complete, accurate, and up-to-date information for big data analytics. As described below, big data replication, ingestion, consolidation, and storage bring different types of data into standardized formats stored in a repository such as a data lake or data warehouse.

Big data replication

Big data analytics requires fast data access, high performance, and an accurate backup of the data. To provide these, data replication copies data from master sources to one or more locations. With change data capture (CDC) technology, replication can even happen in real time as data is written, changed, or deleted.
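To make the CDC idea concrete, here is a minimal sketch of how a replica might be kept in sync by replaying change events. The `ChangeEvent` type, the `apply_changes` function, and the sample change log are hypothetical names invented for illustration; real CDC tools read changes from a database's transaction log and are considerably more involved.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass(frozen=True)
class ChangeEvent:
    """One captured change from the source (hypothetical shape)."""
    op: str                              # "insert", "update", or "delete"
    key: str                             # primary key of the affected row
    row: Optional[dict[str, Any]] = None  # new row contents; None for deletes


def apply_changes(replica: dict[str, dict[str, Any]],
                  events: list[ChangeEvent]) -> dict[str, dict[str, Any]]:
    """Replay change events, in order, against an in-memory replica."""
    for event in events:
        if event.op in ("insert", "update"):
            if event.row is None:
                raise ValueError(f"{event.op} event for {event.key!r} has no row")
            replica[event.key] = dict(event.row)  # copy so the replica owns its data
        elif event.op == "delete":
            replica.pop(event.key, None)          # deleting a missing key is a no-op
        else:
            raise ValueError(f"unknown operation: {event.op!r}")
    return replica


# Assumed sample change log, in the order the source recorded the changes.
change_log = [
    ChangeEvent("insert", "c1", {"name": "Ada", "plan": "free"}),
    ChangeEvent("insert", "c2", {"name": "Grace", "plan": "pro"}),
    ChangeEvent("update", "c1", {"name": "Ada", "plan": "pro"}),
    ChangeEvent("delete", "c2"),
]

replica = apply_changes({}, change_log)
print(replica)
```

Because only the changes are shipped, the replica can stay current without re-copying the full source each time, which is what makes near-real-time replication practical.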

Big data ingestion

Raw data from a variety of sources needs to be moved to a storage location such as a data warehouse or data lake. This process, called big data ingestion, can run as a real-time stream or in batches. Ingestion usually also includes cleaning and standardizing the data so that it is ready for a big data analytics tool.
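As a rough illustration of batch ingestion with cleaning and standardizing, the sketch below normalizes raw records and groups the valid ones into fixed-size batches. The field names (`email`, `signup_date`), the accepted date formats, and the functions `standardize` and `ingest_in_batches` are all assumptions made for this example, not part of any particular ingestion tool.

```python
from datetime import datetime
from typing import Iterable, Iterator, Optional

# Date formats we assume the raw sources use (hypothetical).
ACCEPTED_DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")


def standardize(record: dict[str, str]) -> Optional[dict[str, str]]:
    """Return a cleaned record, or None if the record cannot be used."""
    email = record.get("email", "").strip().lower()
    if not email:
        return None  # drop records with no usable email

    raw_date = record.get("signup_date", "").strip()
    for fmt in ACCEPTED_DATE_FORMATS:
        try:
            signup = datetime.strptime(raw_date, fmt).date().isoformat()
            break
        except ValueError:
            continue
    else:
        return None  # no accepted format matched the date

    return {"email": email, "signup_date": signup}


def ingest_in_batches(records: Iterable[dict[str, str]],
                      batch_size: int) -> Iterator[list[dict[str, str]]]:
    """Clean records and yield them in batches of at most batch_size."""
    batch: list[dict[str, str]] = []
    for record in records:
        clean = standardize(record)
        if clean is None:
            continue
        batch.append(clean)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch  # final, possibly smaller, batch


raw = [
    {"email": " Ada@Example.com ", "signup_date": "2024-01-05"},
    {"email": "", "signup_date": "2024-01-06"},
    {"email": "grace@example.com", "signup_date": "01/07/2024"},
    {"email": "linus@example.com", "signup_date": "not a date"},
    {"email": "KEN@example.com", "signup_date": "2024-02-01"},
]

batches = list(ingest_in_batches(raw, batch_size=2))
for batch in batches:
    print(batch)
```

The same `standardize` function could be applied one record at a time to a stream; only the grouping step differs between the batch and streaming styles.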

Big data consolidation and storage

For big data analytics, data is stored in a data lake or data warehouse. Apache Hadoop, an open source software framework often used to build data lakes, is popular because it is free and its distributed computing model can process big data quickly.
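The distributed computing model mentioned above can be illustrated with a toy map, shuffle, and reduce pipeline in the style of MapReduce, the processing model Hadoop is known for. This sketch runs in a single Python process and does not use any Hadoop API; the function names and the sample chunks are hypothetical, and each chunk stands in for a block of data that a cluster would process on a separate node.

```python
from collections import defaultdict


def map_phase(chunk: list[str]) -> list[tuple[str, int]]:
    """Emit a (word, 1) pair for every word in one chunk of lines."""
    return [(word.lower(), 1) for line in chunk for word in line.split()]


def shuffle(mapped: list[list[tuple[str, int]]]) -> dict[str, list[int]]:
    """Group the values emitted by all mappers by key."""
    grouped: dict[str, list[int]] = defaultdict(list)
    for pairs in mapped:
        for key, value in pairs:
            grouped[key].append(value)
    return grouped


def reduce_phase(grouped: dict[str, list[int]]) -> dict[str, int]:
    """Combine the grouped values for each key into a single count."""
    return {key: sum(values) for key, values in grouped.items()}


# Each inner list plays the role of a data block stored on a different node.
chunks = [
    ["big data big"],
    ["Data lake"],
]

mapped = [map_phase(chunk) for chunk in chunks]  # would run in parallel on a cluster
word_counts = reduce_phase(shuffle(mapped))
print(word_counts)
```

The key property is that each `map_phase` call depends only on its own chunk, so the work can be spread across many machines and the results combined afterward.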

Governed big data

Big data analytics tools should also provide a governed enterprise data catalog. A catalog lets IT profile and document every data source and define who in the organization can take which actions on which data. As a result, users can more easily find, use, and share trusted data sets on their own.
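One way to picture a governed catalog is as a registry of documented data sets, each with per-role permissions that are checked before a user can find or act on the data. The sketch below is a simplified, in-memory illustration; `CatalogEntry`, `DataCatalog`, the role names, and the data set names are all hypothetical and do not correspond to any specific product's API.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Documentation and access rules for one data set (hypothetical shape)."""
    name: str
    description: str
    owner: str
    # Maps a role to the set of actions that role may take, e.g. {"read"}.
    permissions: dict[str, set[str]] = field(default_factory=dict)


class DataCatalog:
    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        """Add or replace the documentation for a data set."""
        self._entries[entry.name] = entry

    def can(self, role: str, action: str, dataset: str) -> bool:
        """Check whether a role may take an action on a data set."""
        entry = self._entries.get(dataset)
        return entry is not None and action in entry.permissions.get(role, set())

    def search(self, keyword: str, role: str) -> list[str]:
        """Find data sets matching a keyword that the role is allowed to read."""
        keyword = keyword.lower()
        return sorted(
            entry.name
            for entry in self._entries.values()
            if keyword in f"{entry.name} {entry.description}".lower()
            and self.can(role, "read", entry.name)
        )


catalog = DataCatalog()
catalog.register(CatalogEntry(
    name="sales_orders",
    description="Daily order totals by region",
    owner="finance-it",
    permissions={"analyst": {"read"}, "engineer": {"read", "write"}},
))
catalog.register(CatalogEntry(
    name="hr_salaries",
    description="Employee salary records",
    owner="hr-it",
    permissions={"hr_admin": {"read", "write"}},
))

print(catalog.can("analyst", "read", "sales_orders"))
print(catalog.can("analyst", "write", "sales_orders"))
print(catalog.search("order", role="analyst"))
print(catalog.search("records", role="analyst"))
```

Filtering search results by permission is a design choice in this sketch: users only discover data sets they are allowed to read, which supports self-service without exposing restricted sources.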